The Hidden Foundation of Data Science

Written by Mario Novkovic and Julia Paris

The Invisible Architecture

When life science, chemistry, and pharmaceutical companies commit to digital transformation, the focus inevitably turns to advanced analytics, actionable insights, and artificial intelligence (AI). Typical initiatives include AI-driven drug discovery, optimization and digitalization of clinical trials, process and testing automation, smart operations and manufacturing, design of experiments, predictive quality models and intelligent pharmacovigilance.

However, many of these initiatives either fail or do not deliver the expected value. This is not because the AI algorithms are not sophisticated enough, nor because the data scientists and engineers lack talent. They fail because, over time, nobody paid close enough attention to the foundation: the invisible architecture beneath the complex digital landscape.

The foundation is Master Data.

Master data are the least glamorous part of any digital transformation strategy. They do not generate headlines, they are not featured in annual reports, and they are never announced at investor meetings.

Yet master data have consistently been one of the most critical factors in determining whether an organization’s data science investments deliver value or disappointment.

What exactly is Master Data?

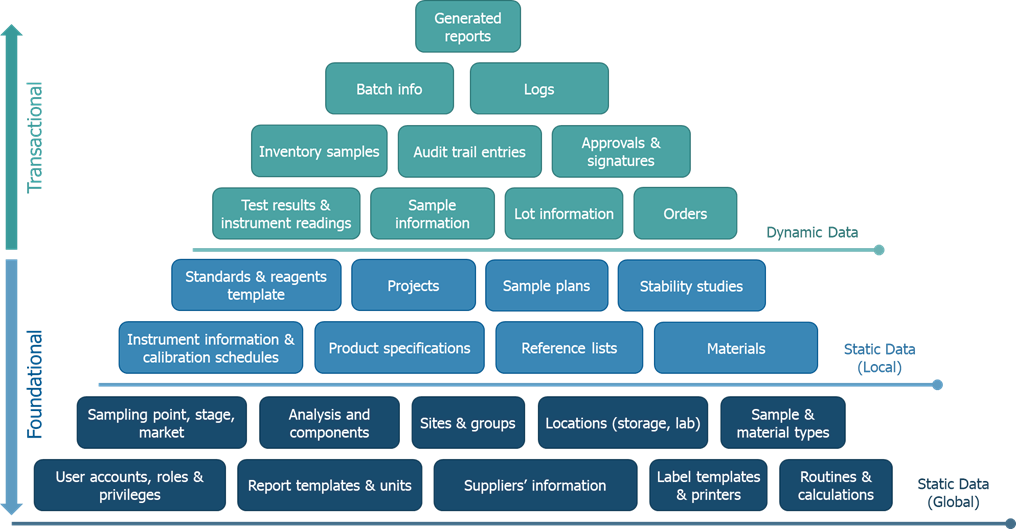

Before we can understand why master data matter so profoundly, we need to understand what they actually are. In most digital system implementations, two types of data are relevant: dynamic (transactional) data and static (foundational) data.

Dynamic Data (the “River”)

Dynamic data are transactional: they are created continuously during laboratory operations, by everyone from analysts testing samples and operators running equipment to quality managers reviewing batches.

Here are some examples of dynamic data that are constantly generated throughout the data lifecycle:

- Test results from laboratories

- Batch records from manufacturing

- Purchase orders and compound usage logs

- Quality measurements throughout production

- Stability data over time

- Reports generated by users

- Audit trail records

This operational history, rich in patterns and insights, is what data scientists want to analyze.

Static Data (the “Riverbed”)

Static data are foundational: they include both master data (which describe core business entities) and configuration data (which define how the system is set up). Static data are specified initially and change infrequently over time. They act as a template, defining the core business entities and structure of the system and providing the framework in which dynamic data are populated. Some examples of static data include:

- Product specifications that define acceptable quality limits and ranges

- Test methods that describe how analyses are performed

- Material definitions that identify substances with standardized codes

- Equipment registries that document assets

- Site and location hierarchies that organize the infrastructure

- User accounts, roles and privileges that define access controls

- Templates for the entire organization

Dynamic data cannot exist without static data. A test result cannot be recorded if the test method does not exist, a batch cannot be manufactured if the product specification is not defined, and a material cannot be labelled if the material code is not established. Hence, static data serve as the foundation for the transactional dynamic data created throughout the data lifecycle.
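The dependency described above is essentially a referential-integrity rule. The minimal Python sketch below illustrates it with simplified, assumed structures: `MasterData`, `ResultRecord`, `record_result` and the codes used are hypothetical and not taken from any particular LIMS, where this rule would normally be enforced by the database schema or system configuration.

```python
from dataclasses import dataclass, field


@dataclass
class MasterData:
    """Static data: registered test methods and materials (hypothetical, simplified)."""
    test_methods: set[str] = field(default_factory=set)
    materials: set[str] = field(default_factory=set)


@dataclass
class ResultRecord:
    """Dynamic data: one result that must reference existing static data."""
    material_code: str
    test_method: str
    value: float


def record_result(md: MasterData, material_code: str, test_method: str, value: float) -> ResultRecord:
    # Refuse to create dynamic data that points at undefined static data.
    if test_method not in md.test_methods:
        raise ValueError(f"Test method {test_method!r} is not defined in master data")
    if material_code not in md.materials:
        raise ValueError(f"Material {material_code!r} is not defined in master data")
    return ResultRecord(material_code, test_method, value)


md = MasterData(test_methods={"DISSOLUTION_UV"}, materials={"MAT-0001"})
ok = record_result(md, "MAT-0001", "DISSOLUTION_UV", 86.5)

try:
    record_result(md, "MAT-0001", "ASSAY_HPLC", 99.1)  # method was never registered
except ValueError as err:
    print(err)
```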

Furthermore, metadata (“data about data”) provide additional context, structure, and information about the data. Metadata are stored together with the data they describe and can apply to both static and dynamic data. For example, when a user enters a test result, the result itself is dynamic data, but the information about who entered it and when is metadata. When an instrument is registered in the system, the instrument record itself is static data, but the information about when it was last calibrated or who last used it is metadata. When a product specification is updated, the specification itself is static data, but the version number and the information about what changed, who approved it and when are metadata. Taken together, metadata represent the “Map of the River”: they influence neither the river nor the riverbed, but without them the river would be hard to trust or navigate.
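One way to keep the distinction between data and metadata visible is to store the metadata alongside, but separate from, the value it describes. The sketch below is an illustrative assumption: the `Metadata` and `Recorded` types and their fields are hypothetical, and regulated systems typically provide this through their built-in audit trail rather than through application code.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Generic, TypeVar

T = TypeVar("T")


@dataclass(frozen=True)
class Metadata:
    """Data about data: who did what, and when (hypothetical fields)."""
    entered_by: str
    entered_at: datetime
    version: int = 1
    reason: str = ""


@dataclass(frozen=True)
class Recorded(Generic[T]):
    """Pairs a piece of static or dynamic data with its metadata."""
    data: T
    meta: Metadata


# Dynamic data: a test result; the metadata records who entered it and when.
result = Recorded(86.5, Metadata("analyst.jdoe", datetime.now(timezone.utc)))

# Static data: a specification revision; the metadata carries version and change reason.
spec_v2 = Recorded(
    {"test": "DISSOLUTION_UV", "min_pct": 80, "timepoint_min": 30},
    Metadata("qa.approver", datetime.now(timezone.utc), version=2, reason="Timepoint clarified"),
)
```

Freezing both types mirrors the idea that the map describes the river without changing it: correcting a record means creating a new version with new metadata rather than editing the old one in place.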

Keep in mind that digital systems are living systems. Although static data serve as a fixed reference, they may still require updates over time, depending on factors such as the product lifecycle, documentation changes, and evolving business requirements. Any such change must follow master data management and change management procedures, which is why good master data management practices are essential.

When updating any static data, a risk impact assessment must be carried out to fully understand the scope of the change. This is particularly important because static data are often interconnected; for example, an update to a test method may impact several products simultaneously. Without a proper assessment, unintended changes can propagate across the system and generate incorrect dynamic data, with potentially serious operational and compliance consequences. A structured approach to change management ensures that all dependencies are identified and that the full extent of any modification is controlled before it is executed.
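The first step of such an impact assessment, finding everything that directly or indirectly depends on the record being changed, can be sketched as a breadth-first walk over a dependency graph. The graph below is hypothetical and hand-written; in practice the dependencies would be queried from the system’s configuration.

```python
from collections import deque

# Hypothetical "is used by" edges: each static record maps to the records that reference it.
USED_BY = {
    "METHOD:DISSOLUTION_UV": ["SPEC:PROD_A", "SPEC:PROD_B"],
    "METHOD:ASSAY_HPLC": ["SPEC:PROD_A"],
    "SPEC:PROD_A": ["PRODUCT:PROD_A"],
    "SPEC:PROD_B": ["PRODUCT:PROD_B"],
}


def impacted_by(record: str) -> set[str]:
    """Return every record that directly or indirectly depends on `record`."""
    seen: set[str] = set()
    queue = deque([record])
    while queue:
        current = queue.popleft()
        for dependent in USED_BY.get(current, []):
            if dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    return seen


# Changing the dissolution method touches two specifications and two products.
print(sorted(impacted_by("METHOD:DISSOLUTION_UV")))
```

The output is only the list of candidates for review; judging whether each dependent actually needs to change remains part of the documented change management procedure.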

Figure 1 shows various examples of static and dynamic data in a Laboratory Information Management System (LIMS).

A clear separation between local and global static data should be defined. Some records, such as analyses, may fall under the responsibility of either the local or the global team, depending on the data governance model, so defining clear ownership boundaries is key to avoiding confusion and ensuring consistency. Globally managed data mainly relate to the system configuration, such as user accounts, security roles and permissions, units, workflows, report templates, calculation rules and business logic.
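Ownership boundaries are easier to enforce when they are written down explicitly. The sketch below assumes a simple, hypothetical ownership map per record type; the global entries follow the examples above, while assigning analyses to the local team is an arbitrary choice for illustration, since the text notes this depends on the governance model.

```python
from enum import Enum


class Owner(Enum):
    GLOBAL = "global"
    LOCAL = "local"


# Hypothetical governance decision: which team owns each record type.
OWNERSHIP = {
    "user_account": Owner.GLOBAL,
    "security_role": Owner.GLOBAL,
    "unit": Owner.GLOBAL,
    "workflow": Owner.GLOBAL,
    "report_template": Owner.GLOBAL,
    "calculation_rule": Owner.GLOBAL,
    "analysis": Owner.LOCAL,  # could equally be GLOBAL, depending on the governance model
}


def can_edit(record_type: str, editor_scope: Owner) -> bool:
    """Allow a change only from the owning team; record types without an owner are rejected."""
    if record_type not in OWNERSHIP:
        raise ValueError(f"No owner defined for {record_type!r}")
    return OWNERSHIP[record_type] is editor_scope
```

Rejecting unmapped record types, rather than silently allowing edits, forces the ownership question to be answered before the data exist.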

Depending on the size of the company and the number of assets needed in the system, static data may not need to be split into global and local data at all. Such fundamental data governance decisions should be carefully considered during the initial design phase.

Finally, if the static data are inconsistent, fragmented or poorly structured, all the dynamic data generated from them will inherit those flaws. This is akin to building a house on quicksand, and it is the reason many data science initiatives fail.

The Tale of Three Sites

Let us illustrate this with a story that plays out quite often in pharmaceutical companies.

The Goal

A global pharmaceutical manufacturer decides to implement predictive analytics for its flagship antibiotic. The vision is promising: use machine learning to predict batch quality from process parameters, reduce the testing burden, and accelerate product release.

All the prerequisites to start the project are met:

- Three manufacturing sites producing the same compound

- Years of historical batch data

- Modern IT infrastructure

- A team of excellent data scientists

The project kicks off to a great start.

The Reality

Six months into the project, the data science team is struggling, not with the AI algorithms, but with aligning data across sites and teams.

- Europe site: the dissolution test is named “DISS_UV_A”. The product specification limit is defined as “80% in 30 minutes”. Equipment is identified by a unique ID. Temperature is logged in Celsius. Sample lot numbers follow the format DD-MM-YY-###.

- US site: the same dissolution test is called “UV_DISSOLUTION_001”. The specification is documented as “>=80%, 30 min.” Equipment naming is based on SAP codes. Temperature is logged in Fahrenheit. Sample lot numbers use the format MMM-DD-YYYY-###.

- Asia site: the same dissolution test is called “APAC_DISSO_STD_2”. The product specification indicates “80-100%”, while a separate method specification document mentions the “30 min” timepoint. Equipment identifiers are based on a third-party inventory management system. Temperature units vary between instruments. Sample lots follow the convention YYYY/MM/DD-###.

It is the same test, the same product, the same company, but three completely different data conventions.
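To make the cost of these differences concrete, here is a minimal sketch of the per-site transformation work the data scientists end up writing. Everything in it is an assumption for illustration: the global code `DISSOLUTION_UV`, the site keys, the lot numbers, and the interpretation of each lot prefix as a date. It also assumes `%b` parses English month abbreviations, which holds in Python’s default C locale.

```python
import re
from datetime import date, datetime

# Hypothetical global test code; each site's local name is mapped onto it.
TEST_NAME_MAP = {
    "DISS_UV_A": "DISSOLUTION_UV",           # Europe
    "UV_DISSOLUTION_001": "DISSOLUTION_UV",  # US
    "APAC_DISSO_STD_2": "DISSOLUTION_UV",    # Asia
}

# Site lot formats from the story, assuming the prefix is a date:
# (regex with date and sequence groups, strptime format for the date group)
LOT_FORMATS = {
    "EU": (r"^(\d{2}-\d{2}-\d{2})-(\d{3})$", "%d-%m-%y"),        # DD-MM-YY-###
    "US": (r"^([A-Za-z]{3}-\d{2}-\d{4})-(\d{3})$", "%b-%d-%Y"),  # MMM-DD-YYYY-###
    "APAC": (r"^(\d{4}/\d{2}/\d{2})-(\d{3})$", "%Y/%m/%d"),      # YYYY/MM/DD-###
}


def to_celsius(value: float, unit: str) -> float:
    """Convert a temperature to Celsius; unknown units are rejected rather than guessed."""
    unit = unit.strip().upper()
    if unit in ("C", "CELSIUS"):
        return value
    if unit in ("F", "FAHRENHEIT"):
        return (value - 32) * 5 / 9
    raise ValueError(f"Unknown temperature unit: {unit!r}")


def parse_lot(site: str, lot: str) -> tuple[date, int]:
    """Split a site-specific lot number into (date, sequence number)."""
    pattern, fmt = LOT_FORMATS[site]
    match = re.match(pattern, lot)
    if match is None:
        raise ValueError(f"Lot {lot!r} does not follow the {site} convention")
    return datetime.strptime(match.group(1), fmt).date(), int(match.group(2))


def harmonize(site: str, test_name: str, temp: float, temp_unit: str, lot: str) -> dict:
    """Map one site-specific record onto a single shared convention."""
    lot_date, seq = parse_lot(site, lot)
    return {
        "site": site,
        "test_code": TEST_NAME_MAP[test_name],
        "temperature_c": round(to_celsius(temp, temp_unit), 1),
        "lot_date": lot_date.isoformat(),
        "lot_seq": seq,
    }


records = [
    harmonize("EU", "DISS_UV_A", 37.0, "C", "05-03-24-017"),
    harmonize("US", "UV_DISSOLUTION_001", 98.6, "F", "Mar-05-2024-017"),
    harmonize("APAC", "APAC_DISSO_STD_2", 37.0, "C", "2024/03/05-017"),
]
```

Note what such code cannot do: the three specification texts (“80% in 30 minutes”, “>=80%, 30 min.”, and “80-100%” with the timepoint in a separate document) cannot be reconciled reliably by parsing, and the Asia site’s mixed temperature units are only safe to convert if every reading carries its unit. Those gaps have to be closed in the master data itself.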

The Consequence

The data scientists manage to combine the datasets, but each site requires significant custom data transformation and standardization work. Assumptions are made, workarounds are created, risks are accepted, and the exceptions pile up in the data. The AI model that worked perfectly in the pilot phase on European site data now produces nonsensical predictions for the other sites.

After a year, the project is still ongoing, at a significantly higher cost and with much longer timelines than initially planned. What was meant to be a streamlined AI initiative has turned into a complex data remediation effort. This is not because the goal was unrealistic, the AI model was unfit for purpose or the team lacked talent, but because the master data foundation was never properly assessed and built.

The Lesson

This is not a data science problem; it is a master data problem. No algorithm, however advanced, can compensate for a poorly built foundation.

The company spent hundreds of millions on technology, infrastructure and data science talent, but failed to invest hundreds of thousands in standardizing the master data first.

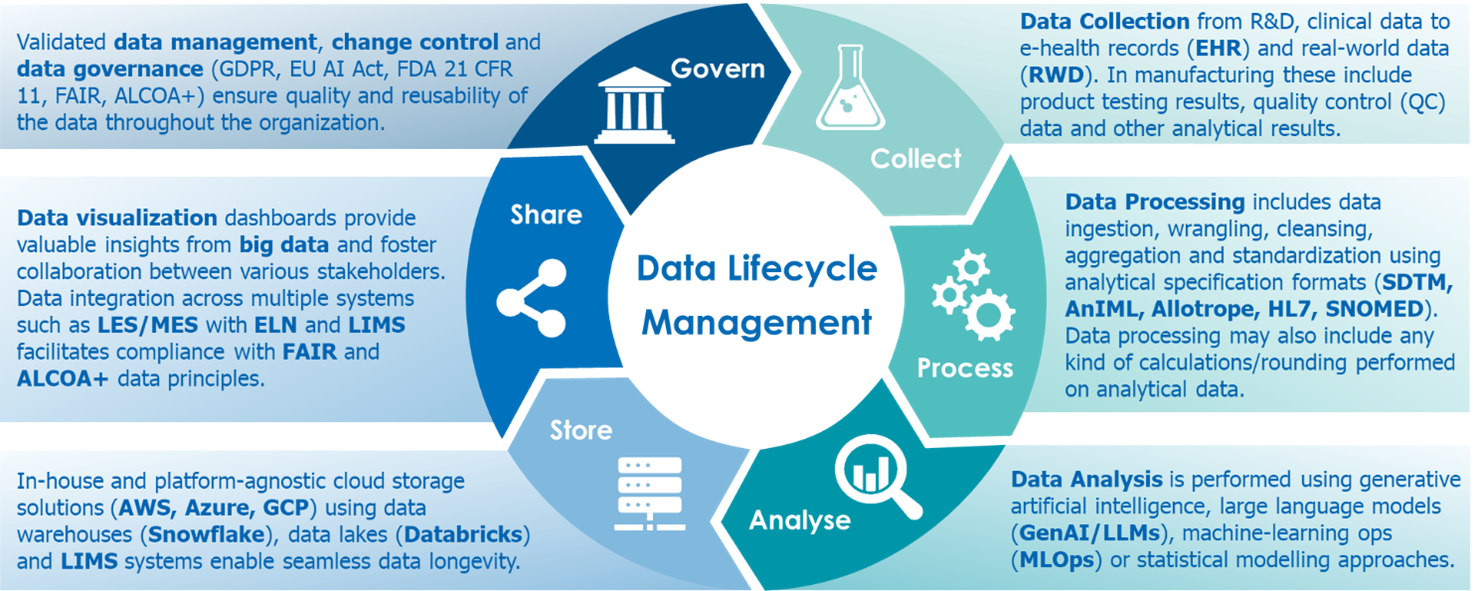

Data Lifecycle Management

One of the most important prerequisites for data science is therefore good data. Achieving this requires three pillars: a proper master data design concept, a clear governance framework, and a disciplined change management process. Together, these ensure that data are clean, standardized, structured, and harmonized across systems and sites. This approach avoids the infamous “garbage in, garbage out” situation, where poor-quality input data lead to poor-quality, erroneous output.

Data collection and processing are often-underestimated first steps in any data science initiative. A substantial amount of time must be invested in consolidating and standardizing data collected from multiple sources, often in unstructured formats.

The foundation starts with a master data design concept: a guideline, produced at the start of any system implementation project, that defines how master data should be structured, named, categorized, and governed across the organization. It establishes clear boundaries between globally and locally managed master data, defines data standards, formats, and naming conventions, and ensures that all departments and sites work with the same data structure. The design concept also improves data consistency across systems, which enables scaling to multiple sites and increases operational efficiency by reducing manual data transformations, re-testing and troubleshooting.
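Parts of a design concept can be made machine-checkable, so that new records are validated against the agreed conventions when they are created rather than cleaned up afterwards. The rules below are hypothetical examples (an upper-case, underscore-separated naming pattern, a fixed list of approved units, and a set of required fields), not a recommended standard.

```python
import re

# Hypothetical conventions taken from a master data design concept.
NAME_PATTERN = re.compile(r"^[A-Z]+(_[A-Z0-9]+)+$")  # e.g. DISSOLUTION_UV
ALLOWED_UNITS = {"%", "min", "degC", "mg"}
REQUIRED_FIELDS = ("name", "unit", "owner")


def violations(record: dict) -> list[str]:
    """Return all convention violations; an empty list means the record conforms."""
    problems = [f"missing field: {f}" for f in REQUIRED_FIELDS if not record.get(f)]
    name = record.get("name", "")
    if name and not NAME_PATTERN.match(name):
        problems.append(f"name {name!r} does not follow the naming convention")
    unit = record.get("unit")
    if unit and unit not in ALLOWED_UNITS:
        problems.append(f"unit {unit!r} is not in the approved unit list")
    return problems


good = {"name": "DISSOLUTION_UV", "unit": "%", "owner": "global"}
bad = {"name": "Diss test 1", "unit": "F"}
```

A format rule alone cannot stop two sites from registering the same test under two valid-looking names; that still requires a governed global code list.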

A critical part of this design is finding the configuration setup that makes change management as simple and efficient as possible. This involves carefully determining at which level each data field should be configured, so that the structure minimizes redundancy, avoids unnecessary duplication, and allows future changes to be applied consistently across all related entities. The result is significantly lower maintenance effort, a limited risk of inconsistencies, and a more predictable, controlled change management process.
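One common way to decide at which level a field is configured is layered inheritance: a value is defined once at the most general sensible level and overridden only where genuinely needed. The sketch below models this with Python’s standard `collections.ChainMap`; the two levels (test method and product) and the field names are hypothetical.

```python
from collections import ChainMap

# Defined once at test-method level and shared by every product that uses the method.
method_defaults = {"timepoint_min": 30, "min_pct": 80, "temperature_c": 37.0}

# Product level: only genuine deviations are stored (hypothetical example).
product_a = {}
product_b = {"timepoint_min": 45}

spec_a = ChainMap(product_a, method_defaults)
spec_b = ChainMap(product_b, method_defaults)

# A single change at method level propagates to every product that does not override it.
method_defaults["min_pct"] = 85
print(spec_a["min_pct"], spec_b["min_pct"], spec_b["timepoint_min"])  # 85 85 45
```

The trade-off is that an override hides later changes made at the higher level, so the overrides themselves should be reviewed as part of change management.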

Once the design is established, data cleansing and harmonization are the next essential steps: incorrect, invalid, duplicate, or missing data are systematically identified and corrected. Good standardization practices improve interoperability, enabling easier integration between systems such as LIMS, Electronic Lab Notebook (ELN), Manufacturing Execution System (MES), Laboratory Execution System (LES), Enterprise Resource Planning (ERP) and Customer Relationship Management (CRM), and greatly reducing the costs of new system implementations. Continued data cleansing during the operational phase ensures that low-quality data do not accumulate over time and that data maintenance does not become a long-term burden.
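The identification step of cleansing can be partly automated. The sketch below flags missing, invalid and possibly duplicate entries in a small list of material definitions; the `MAT-####` code format, the field names and the normalization rule (names compared case- and whitespace-insensitively) are assumptions for illustration. Correcting the flagged records remains a governed, human decision.

```python
import re
from collections import defaultdict

CODE_FORMAT = re.compile(r"^MAT-\d{4}$")  # hypothetical material code convention


def normalize(name: str) -> str:
    """Collapse case and whitespace so 'Sodium  Chloride' and 'sodium chloride' compare equal."""
    return " ".join(name.lower().split())


def audit_materials(materials: list[dict]) -> dict[str, list[str]]:
    """Group material codes by the kind of data quality issue found."""
    issues: dict[str, list[str]] = defaultdict(list)
    by_name: dict[str, list[str]] = defaultdict(list)
    for m in materials:
        code, name = m.get("code", ""), m.get("name", "")
        if not name:
            issues["missing_name"].append(code)
            continue
        if not CODE_FORMAT.match(code):
            issues["invalid_code"].append(code)
        by_name[normalize(name)].append(code)
    for codes in by_name.values():
        if len(codes) > 1:
            issues["possible_duplicates"].append(", ".join(codes))
    return dict(issues)


materials = [
    {"code": "MAT-0001", "name": "Sodium Chloride"},
    {"code": "MAT-0002", "name": "sodium  chloride"},
    {"code": "AMX-EU", "name": "Amoxicillin trihydrate"},
    {"code": "MAT-0004", "name": ""},
]
print(audit_materials(materials))
```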

Taken together, these data management principles ensure that data are clean, accurate and consistent across systems and sites. A well-designed master data concept, strong governance and disciplined change management therefore give downstream AI-powered analytics the solid foundation they need to deliver meaningful results.

Conclusion: the Foundation nobody sees

Master data are the core infrastructure of any digitalization effort and the foundation for all business processes that rely on interconnected digital systems. Organizations often overlook or underestimate this foundation, ending up with chaotic master data that cannot be used reliably and efficiently for sophisticated data science. Only when processes and initiatives start failing do master data receive dedicated attention.

It is never too late to build this foundation, or to improve an existing one. Whether implementing a new LIMS or ELN, integrating an MES into the digital landscape, transitioning from paper-based to fully digitalized laboratories, or deploying AI models for advanced analytics, investing in master data quality (the invisible) from the beginning brings long-term benefits and cost savings (the visible).

How can wega help?

At wega Informatik AG, we have spent over 30 years supporting various life science, chemistry and pharmaceutical organizations in building their digital IT landscapes to enable both regulatory compliance and advanced analytics.

Our expertise covers the full data and systems lifecycle, supporting organizations at every stage to ensure that the right foundations are built from the start and that data continue to deliver value over time. We offer the following related services:

- Selection and implementation of digital systems such as LIMS, ELN, MES and LES

- Design of solid master data foundations, governance frameworks and data strategy

- Definition of change management processes

- Harmonization and data cleansing across sites and systems

- Integration of multiple platforms into a coherent digital landscape

- Collection and processing of raw data into structured, analytics-ready formats

- Analysis and visualization of data through dashboards and reports

Ready to assess your Foundation?